
AI and Machine Learning Exploit, Deepfake Videos, Now Harder to Detect

Deepfake videos are enabled by machine learning and data analytics. They’re also highly believable, and now easier and cheaper than ever to produce while simultaneously being harder to detect.

As we head into the next presidential election campaign season, you’ll want to be aware of the dangers posed by fake online videos made with artificial intelligence (AI) and machine learning (ML). Using AI software, people can create deepfake (short for “deep learning” and “fake”) videos in which ML algorithms perform a face swap to create the illusion that someone said something they didn’t say or is someone they’re not. Deepfake videos are showing up in various arenas, from entertainment to politics to the corporate world. Not only can deepfake videos unfairly influence an election with false messages, but they can also cause personal embarrassment or spread misleading brand messages if, say, they show a CEO announcing a product launch or an acquisition that never happened.

Deepfakes are closely tied to a category of AI called generative adversarial networks, or GANs, in which two neural networks compete to create photographs or videos that appear real. A GAN consists of a generator, which creates new data such as a fake video frame, and a discriminator, which uses an ML algorithm to judge whether a given sample is real or generated. The generator keeps refining its output based on the discriminator’s verdicts until the discriminator can no longer tell the fake data from the real data.

As Steve Grobman, McAfee’s Senior Vice President and Chief Technology Officer (CTO), pointed out at the RSA Conference 2019 in San Francisco in March, fake photographs have been around since the invention of photography. He noted that altering photos has long been a simple task in an application such as Adobe Photoshop. Now these advanced editing capabilities are moving into video as well, powered by highly capable and easily accessible software tools.

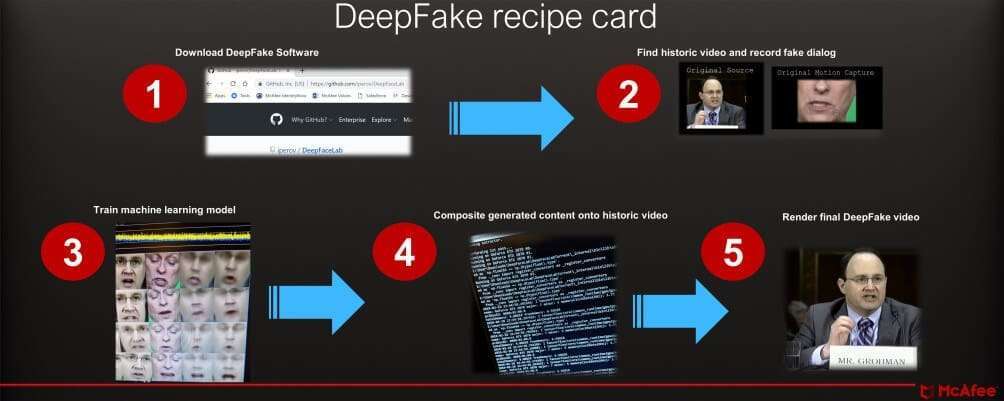

How Deepfakes Are Created

Although understanding AI concepts is helpful, you don’t need to be a data scientist to build a deepfake video. According to Grobman, it just involves following some instructions online. At the RSA Conference 2019 (see video above), he and Dr. Celeste Fralick, Chief Data Scientist and Senior Principal Engineer at McAfee, unveiled a deepfake video that illustrated the threat this technology presents. Grobman and Fralick showed how a video of a public official saying something dangerous could mislead the public into thinking the message is real.

To create their video, Grobman and Fralick downloaded deepfake software. They then took a video of Grobman testifying before the US Senate in 2017 and superimposed Fralick’s mouth onto Grobman’s.

“I used freely available public comments by [Grobman] to create and train an ML model; that let me develop a deepfake video with my words coming out of [his] mouth,” Fralick told the RSA audience from onstage. Fralick went on to say that deepfake videos could be used for social exploitation and information warfare.

To make their deepfake video, Grobman and Fralick used FakeApp, a tool developed by a Reddit user that employs ML algorithms and photos to swap faces in videos. During their RSA presentation, Grobman explained the next steps. “We split the videos into still images, we extracted the faces, and we cleaned them up by sorting them and cleaned them up in Instagram.”
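The first steps Grobman describes, splitting a video into still images and extracting the faces, amount to simple array operations once a face detector has supplied bounding boxes. The sketch below assumes the video has already been decoded into a NumPy array of frames and that the boxes come from some separate face detector; `sample_frames`, `crop_faces`, `resize_nearest`, the every-Nth-frame sampling, and the 64x64 output size are hypothetical choices for illustration, not FakeApp’s actual settings.

```python
import numpy as np


def sample_frames(video: np.ndarray, every_n: int) -> np.ndarray:
    """Split a decoded video (frames, height, width, channels) into stills,
    keeping every n-th frame to cut down on near-duplicate images."""
    return video[::every_n]


def resize_nearest(img: np.ndarray, size: int) -> np.ndarray:
    """Nearest-neighbor resize to size x size, keeping the sketch dependency-free."""
    h, w = img.shape[:2]
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    return img[rows][:, cols]


def crop_faces(frames: np.ndarray,
               boxes: list[tuple[int, int, int, int]],
               size: int = 64) -> np.ndarray:
    """Crop one face per frame using (top, left, bottom, right) boxes, which are
    assumed to come from a separate face detector, and scale all crops to one size."""
    crops = [resize_nearest(f[t:b, l:r], size) for f, (t, l, b, r) in zip(frames, boxes)]
    return np.stack(crops)


if __name__ == "__main__":
    video = np.zeros((30, 120, 160, 3), dtype=np.uint8)  # 30 synthetic frames
    stills = sample_frames(video, every_n=10)             # keeps frames 0, 10, 20
    faces = crop_faces(stills, [(10, 20, 90, 100)] * len(stills))
    print(faces.shape)  # (3, 64, 64, 3)
```

Normalizing every crop to the same size matters because the models trained later expect fixed-size inputs; the “sorting” step Grobman mentions would then weed out crops that are blurry or show the wrong person.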

The McAfee team used Python scripts to generate mouth movements so that Fralick’s speech matched Grobman’s mouth, and they also needed to write some custom scripts. Creating a convincing deepfake is especially challenging when characteristics like gender, age, and skin tone don’t match up, Grobman said.

He and Fralick then used a final AI algorithm to match the images of Grobman testifying before the Senate with Fralick’s speech. Grobman added that it took 12 hours to train these ML algorithms.

McAfee outlined the steps it took to create a deepfake video shown at the 2019 RSA Conference. It used deepfake software called FakeApp and trained ML models to alter video of Grobman with speech from Fralick. (Image Credit: McAfee)

The Consequences of Deepfakes

Deepfake videos created by hackers have the potential to cause many problems, from spreading misinformation attributed to government officials, to embarrassing celebrities with videos they never appeared in, to damaging competitors’ stock market standing. Aware of these problems, lawmakers in September sent a letter to Daniel Coats, US Director of National Intelligence, requesting a review of the threat that deepfakes pose. The letter warned that countries such as Russia could use deepfakes on social media to spread false information. In December, lawmakers introduced the Malicious Deep Fake Prohibition Act of 2018 to outlaw fraud in connection with “audiovisual records,” meaning deepfakes. It remains to be seen whether the bill will pass.

As mentioned, celebrities can suffer embarrassment from videos in which their faces have been superimposed over porn stars’ faces, as was the case with Gal Gadot. Or imagine a video of a CEO supposedly announcing product news that sinks the company’s stock. Security professionals can use ML to detect these types of attacks, but if the fakes aren’t detected in time, they can do serious damage to a country or a brand.

“With deepfakes, if you know what you’re doing and you know who to target, you can really come up with a [very] convincing video to cause a lot of damage to a brand,” said Dr. Chase Cunningham, Principal Analyst at Forrester Research. He added that if you distribute these messages on LinkedIn or Twitter or make use of a bot farm, “you can crush the stock of a company based on [a] totally bogus video without a whole lot of effort.”

Through deepfake videos, consumers could be tricked into believing a product can do something that it can’t. Cunningham noted that if a bogus video showed a major car manufacturer’s CEO saying the company would no longer manufacture gas-powered vehicles, and that video spread on Twitter or LinkedIn, it could easily damage the brand.

“Interestingly enough, from my research, people make decisions based on headlines and videos in 37 seconds,” Cunningham said. “So you can imagine if you can get a video that runs longer than 37 seconds, you can get people to make a decision based on whether [the video is] factual or not. And that’s terrifying.”

Because deepfake videos can go viral on social media, social media companies are actively working to combat the threat. Facebook, for example, deploys engineering teams that can spot manipulated photos, audio, and video. In addition to using software, Facebook and other social media companies hire people to look for deepfakes manually.

“We’ve expanded our ongoing efforts to combat manipulated media to include tackling deepfakes,” a Facebook representative said in a statement. “We know the continued emergence of all forms of manipulated media presents real challenges for society. That’s why we’re investing in new technical solutions, learning from academic research, and working with others in the industry to understand deepfakes and other forms of manipulated media.”

Not All Deepfakes Are Bad

As we have seen with the educational deepfake video by McAfee and the comedic deepfake videos on late night TV, some deepfake videos are not necessarily bad. In fact, while politics can expose the real dangers of deepfake videos, the entertainment industry often just shows deepfake videos’ lighter side.

For example, a recent episode of The Late Show With Stephen Colbert showed a funny deepfake video in which actor Steve Buscemi’s face was superimposed over actress Jennifer Lawrence’s body. In another case, comedian Jordan Peele supplied his own voice for a manipulated video of former President Barack Obama speaking. Other humorous deepfake videos have appeared online in which President Trump’s face is superimposed over German Chancellor Angela Merkel’s face as she speaks.

Again, if the deepfake videos are used for a satirical or humorous purpose or simply as entertainment, then social media platforms and even movie production houses permit or use them. For example, Facebook allows this type of content on its platform, and Lucasfilm used a type of digital recreation to feature a young Carrie Fisher on the body of actress Ingvild Deila in “Rogue One: A Star Wars Story.”

McAfee’s Grobman noted that some of the technology behind deepfakes is put to good use with stunt doubles in moviemaking to keep actors safe. “Context is everything. If it’s for comedic purposes and it’s obvious that it’s not real, that’s something that’s a legitimate use of technology,” Grobman said. “Recognizing that it can be used for all sorts of different purposes is key.”

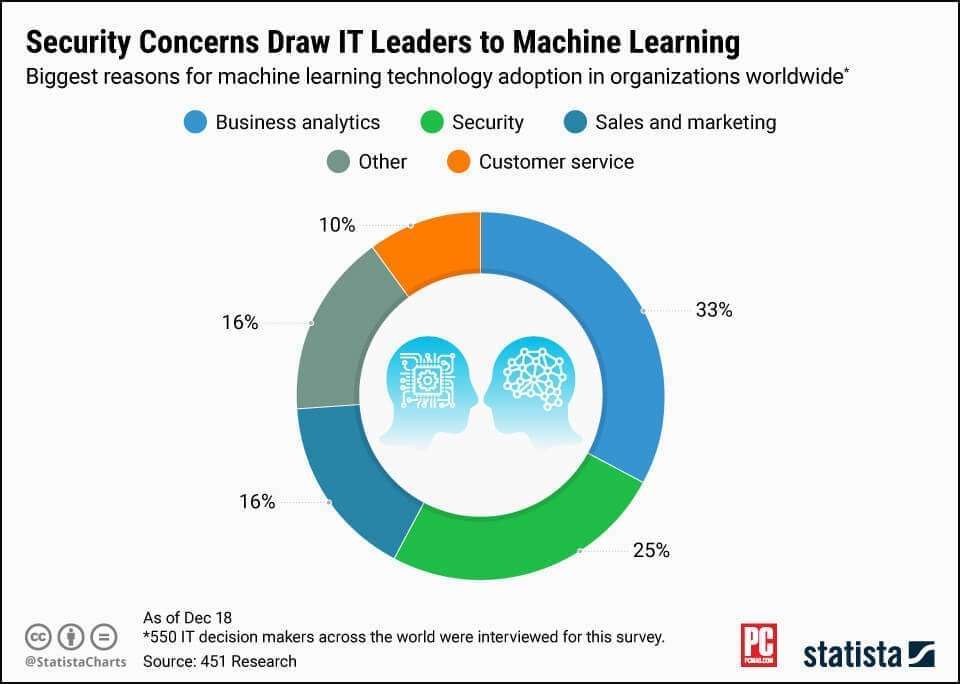

(Image Credit: Statista)

How to Detect Deepfake Videos

McAfee isn’t the only security firm experimenting with ways to detect fake videos. In a paper presented at Black Hat 2018 titled “AI Gone Rogue: Exterminating Deep Fakes Before They Cause Menace,” two Symantec security experts, Security Response Lead Vijay Thaware and Software Development Engineer Niranjan Agnihotri, write that they have created a tool to spot fake videos based on Google FaceNet. FaceNet is a neural network architecture that Google researchers developed for face verification and recognition. It maps each face image to a compact numeric vector, called an embedding, so that faces of the same person land close together; a new face can then be verified by measuring how far its embedding lies from that of a known reference image.
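FaceNet-style verification boils down to comparing embeddings. The sketch below assumes some FaceNet-like model has already turned each face into an embedding vector; the three-number toy vectors, the function names, and the 1.1 squared-distance threshold are hypothetical illustration values, not Symantec’s tool or a tuned setting. It also shows one way a detector could use the idea: flag frames whose face drifts too far from verified footage of the person the video claims to show.

```python
import math
from typing import Sequence


def l2_normalize(v: Sequence[float]) -> list[float]:
    """Scale an embedding to unit length, as FaceNet does with its outputs."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between unit-length embeddings (range 0 to 4)."""
    a, b = l2_normalize(a), l2_normalize(b)
    return sum((x - y) ** 2 for x, y in zip(a, b))


def same_person(reference: Sequence[float], candidate: Sequence[float],
                threshold: float = 1.1) -> bool:
    """Verification: a small distance means the faces likely belong to one person."""
    return squared_distance(reference, candidate) < threshold


def suspicious_frames(reference: Sequence[float],
                      frame_embeddings: Sequence[Sequence[float]],
                      threshold: float = 1.1) -> list[int]:
    """Return indices of frames whose face does not match the reference identity."""
    return [i for i, e in enumerate(frame_embeddings)
            if not same_person(reference, e, threshold)]


if __name__ == "__main__":
    reference = [0.9, 0.1, 0.4]   # embedding from verified footage (toy values)
    frames = [
        [0.88, 0.12, 0.41],       # close to the reference
        [0.1, 0.95, 0.2],         # a different-looking face
    ]
    print(suspicious_frames(reference, frames))  # [1]
```

Real embeddings have many more dimensions (the FaceNet paper uses 128), and the threshold has to be tuned on labeled pairs; a well-made face swap can also stay close to the target identity, which is one reason detection remains hard.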

To try to stop the spread of deepfake videos, AI Foundation, a nonprofit organization focused on human and AI interaction, offers software called “Reality Defender” to spot fake content. It scans images and video to see whether they have been altered using AI; if they have, they are marked with an “Honest AI Watermark.”

Another strategy is to keep in mind the concept of Zero Trust, summed up as “never trust, always verify,” a cybersecurity principle under which IT professionals confirm that every user is legitimate before granting access privileges. Applied to video, it means remaining skeptical of a clip’s authenticity until it can be verified. You’ll also want software with digital analytics capabilities to spot fake content.

Looking Out for Deepfakes

Going forward, we’ll need to be more cautious with video content and keep in mind the dangers it can present to society if misused. As Grobman noted, “In the near term, people need to be more skeptical of what they see and recognize that video and audio can be fabricated.”

So keep a skeptical eye on the political videos you watch as we head into the next election season, and don’t automatically trust videos featuring corporate leaders. What you hear may not be what was really said, and misleading deepfake videos have the potential to do real damage to our society.